
Using Zero Density Reality Engine™ with AW-UE150 and AW-UE100 for Virtual Studio applications

Zero Density Reality Engine™

Enables access to a powerful, high-quality and affordable AR system for your virtual studio needs!

Key features of Zero Density Reality Engine™


| Feature |
| --- |
| Position Data Notification (PTZF) |
| FreeD protocol via serial/IP |
| Wider angle of view (75.1°) |
| 4K acquisition |
| Simultaneous UHD and HD outputs |

The Panasonic AW-UE150 and AW-UE100 broadcast-quality PTZ cameras provide Position Data Notification (PTZF) and support the FreeD protocol, which makes them compatible with virtual studio and augmented reality applications. Because they use the FreeD protocol, the AW-UE150 and AW-UE100 work with the ‘Reality Engine’ software from Zero Density. Together, the Panasonic PTZ cameras and Reality Engine make AR easily accessible and affordable.

V-Log support was added to Reality Engine in version 2.9 and improves the picture quality acquired from Panasonic cameras, including the UE150, UE100, AK-UC4000 and VariCam LT. These cameras can output V-Log, so the video signal can be processed more natively within Zero Density Reality Engine. This produces a better result in the keyer and raises the overall quality of the virtual studio production.
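To make "processed more natively" a little more concrete, here is a minimal sketch of decoding a V-Log signal back to scene-linear light, using the curve constants Panasonic publishes for V-Log. It only illustrates the transfer function; how Reality Engine handles V-Log internally is not described here, and the function names are hypothetical.

```python
import math

# Constants from Panasonic's published V-Log curve definition.
CUT1 = 0.01       # linear-light threshold between the two curve segments
CUT2 = 0.181      # the same threshold expressed as a V-Log signal value
B = 0.00873
C = 0.241514
D = 0.598206


def linear_to_vlog(reflectance: float) -> float:
    """Encode scene-linear reflectance (0.18 = 18% grey) as a normalized V-Log value."""
    if reflectance < CUT1:
        return 5.6 * reflectance + 0.125
    return C * math.log10(reflectance + B) + D


def vlog_to_linear(signal: float) -> float:
    """Decode a normalized V-Log value (0..1) back to scene-linear reflectance."""
    if signal < CUT2:
        return (signal - 0.125) / 5.6
    return 10.0 ** ((signal - D) / C) - B


if __name__ == "__main__":
    grey = linear_to_vlog(0.18)
    print(f"18% grey encodes to V-Log {grey:.4f}")
    print(f"and decodes back to {vlog_to_linear(grey):.4f}")
```

Working on the decoded linear values, rather than on a display-referred gamma signal, is what lets a keyer or compositor treat light levels proportionally.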

Using Zero Density Reality Engine™ with AW-UE150 and AW-UE100 for Virtual Studio applications

The AW-UE150 and AW-UE100 provide real-time position data to Reality Engine™. The camera is thereby “tracked”, with the FreeD data sent over a serial or IP link.
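As an illustration of what such tracking data looks like, the sketch below decodes a FreeD "D1" packet. It assumes the commonly documented 29-byte layout (message type `0xD1`, camera ID, 24-bit pan/tilt/roll in 1/32768 degree, 24-bit X/Y/Z in 1/64 mm, 24-bit raw zoom and focus, two spare bytes, one checksum byte). The names `FreeDPose`, `parse_freed_d1` and `encode_freed_d1` are hypothetical, and the layout should be checked against the camera's own protocol documentation before relying on it.

```python
from dataclasses import dataclass

FREED_D1_TYPE = 0xD1    # message type byte of a D1 packet (assumed layout)
FREED_D1_LENGTH = 29    # total packet length in bytes, checksum included


@dataclass
class FreeDPose:
    camera_id: int
    pan_deg: float
    tilt_deg: float
    roll_deg: float
    x_mm: float
    y_mm: float
    z_mm: float
    zoom_raw: int       # raw encoder value, scaling is device specific
    focus_raw: int      # raw encoder value, scaling is device specific


def freed_checksum(body: bytes) -> int:
    """Checksum as commonly documented: 0x40 minus the byte sum, modulo 256."""
    return (0x40 - sum(body)) & 0xFF


def _signed24(chunk: bytes) -> int:
    return int.from_bytes(chunk, "big", signed=True)


def parse_freed_d1(packet: bytes) -> FreeDPose:
    """Validate and decode one D1 packet into physical units."""
    if len(packet) != FREED_D1_LENGTH:
        raise ValueError(f"expected {FREED_D1_LENGTH} bytes, got {len(packet)}")
    if packet[0] != FREED_D1_TYPE:
        raise ValueError(f"unexpected message type 0x{packet[0]:02X}")
    if freed_checksum(packet[:-1]) != packet[-1]:
        raise ValueError("checksum mismatch")
    return FreeDPose(
        camera_id=packet[1],
        pan_deg=_signed24(packet[2:5]) / 32768.0,
        tilt_deg=_signed24(packet[5:8]) / 32768.0,
        roll_deg=_signed24(packet[8:11]) / 32768.0,
        x_mm=_signed24(packet[11:14]) / 64.0,
        y_mm=_signed24(packet[14:17]) / 64.0,
        z_mm=_signed24(packet[17:20]) / 64.0,
        zoom_raw=int.from_bytes(packet[20:23], "big"),
        focus_raw=int.from_bytes(packet[23:26], "big"),
    )


def encode_freed_d1(pose: FreeDPose) -> bytes:
    """Build a D1 packet from a pose; handy for testing a parser without a camera."""
    def s24(value: float) -> bytes:
        return int(round(value)).to_bytes(3, "big", signed=True)

    body = (
        bytes([FREED_D1_TYPE, pose.camera_id])
        + s24(pose.pan_deg * 32768) + s24(pose.tilt_deg * 32768) + s24(pose.roll_deg * 32768)
        + s24(pose.x_mm * 64) + s24(pose.y_mm * 64) + s24(pose.z_mm * 64)
        + pose.zoom_raw.to_bytes(3, "big") + pose.focus_raw.to_bytes(3, "big")
        + b"\x00\x00"  # spare bytes
    )
    return body + bytes([freed_checksum(body)])


if __name__ == "__main__":
    sample = FreeDPose(1, 45.0, -10.0, 0.0, 1000.0, -250.5, 1500.0, 4096, 2048)
    print(parse_freed_d1(encode_freed_d1(sample)))
```

For a PTZ camera on a fixed mount, the pan, tilt, zoom and focus fields are the ones that change during operation. The raw zoom and focus values only become a field of view once they are mapped through a lens calibration.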

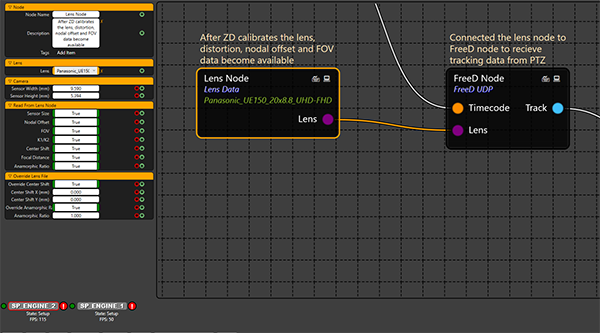

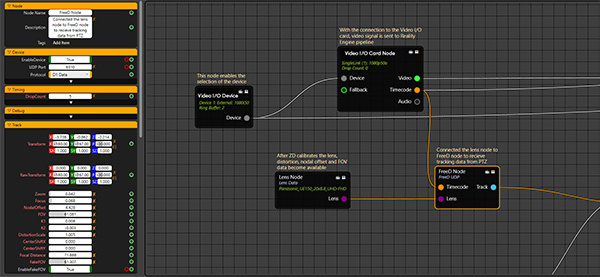

Lens node

The camera's lens is already calibrated in the Zero Density system and simply needs to be selected in the Lens node. The camera can be used in either Full HD or 4K. In short, combining the UE150 and UE100 with Zero Density Reality Engine™ gives many more users access to the AR and VR world through a powerful, high-quality and affordable solution.

Tracking node

Reality Engine™ from Zero Density is a real-time, node-based compositor that enables real-time visual effects pipelines, combining video I/O, keying, compositing and rendering in a single piece of software. As a photo-realistic virtual studio production solution, Reality Engine™ gives its clients the tools to create highly immersive content and to revolutionize storytelling in the broadcasting, media and cinema industries.

Reality Nodegraph Pipeline

Reality Engine™ uses Unreal Engine by Epic Games, the most photo-realistic real-time game engine, as the 3D renderer. With the advanced real-time visual effects capabilities, Reality ensures the most photo-realistic composite output possible.

Reality Keyer™ provides spectacular results for keying of contact shadows, transparent objects and sub-pixel details like hair.

In an approach Zero Density describes as unique in the industry, Reality Engine™ composites images using 16-bit floating-point arithmetic. Because it controls the full compositing pipeline, all gamma and color space conversions are handled correctly. This allows the real world and the virtual elements to be blended perfectly.
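To show why the handling of gamma matters, the sketch below blends a foreground over a background twice: once directly on gamma-encoded values, and once after converting to linear light and storing intermediates at 16-bit float precision. It uses the standard sRGB transfer function as a stand-in, which is an assumption; the actual color spaces and internals of Reality Engine are not described here, and all function names are hypothetical.

```python
import struct


def srgb_to_linear(value: float) -> float:
    """Decode an sRGB-encoded value (0..1) to linear light."""
    if value <= 0.04045:
        return value / 12.92
    return ((value + 0.055) / 1.055) ** 2.4


def linear_to_srgb(value: float) -> float:
    """Encode a linear-light value (0..1) with the sRGB transfer function."""
    if value <= 0.0031308:
        return value * 12.92
    return 1.055 * value ** (1.0 / 2.4) - 0.055


def to_half(value: float) -> float:
    """Round a Python float to the nearest IEEE 754 16-bit float."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


def over_naive(fg: float, alpha: float, bg: float) -> float:
    """Blend directly on the gamma-encoded values (physically incorrect)."""
    return fg * alpha + bg * (1.0 - alpha)


def over_linear_half(fg: float, alpha: float, bg: float) -> float:
    """Blend in linear light, keeping intermediates at half precision."""
    fg_lin = to_half(srgb_to_linear(fg))
    bg_lin = to_half(srgb_to_linear(bg))
    blended = to_half(fg_lin * alpha + bg_lin * (1.0 - alpha))
    return linear_to_srgb(blended)


if __name__ == "__main__":
    # A half-transparent white edge (for example a strand of hair) over black.
    print(f"gamma-space blend:  {over_naive(1.0, 0.5, 0.0):.4f}")
    print(f"linear-light blend: {over_linear_half(1.0, 0.5, 0.0):.4f}")
```

The two results differ visibly for semi-transparent pixels, which is where soft edges, shadows and fine detail such as hair live.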

Take Back the Stadiums: Virtual Fans, Striking Advertisement and More Entertainment

Leveraging high viewership with latest technology

Sports have returned to the stadiums, but the audiences have stayed at home. The result is high ratings without the traditional atmosphere. Why not use the current circumstances to create new opportunities for sports events in general? Zero Density and Dreamwall have produced an example of how to leverage high viewership with the latest technology, using the AW-UE150 PTZ camera.
